We are back from DevFest Toulouse, where we attended several talks, learned a lot, and presented a customized version of our talk, Clone ChatGPT with Hugging Face and Elasticsearch.

After a three-year absence, DevFest Toulouse is back thanks to a renewed organizing team.

DevFest, or “Developers Festival”, is a technical conference for developers. It is aimed at students, professionals, and curious technophiles. Throughout the day, speakers presented a range of topics: mobile development, the Web, data, connected objects, the Cloud, DevOps, and modern development practices. Breaks gave attendees time to talk with each other and explore the topics in more depth.

The selection committee was keen to offer a varied and captivating program. The day was a chance to meet nationally renowned speakers, and local speakers also featured prominently. DevFest is part of an international program and is organized in partnership with Google.

DevFest Toulouse is organized by volunteers, including our colleague Aline Paponaud. The 2023 edition took place at the Diagora Congress and Exhibition Center in Labège on November 16, 2023.

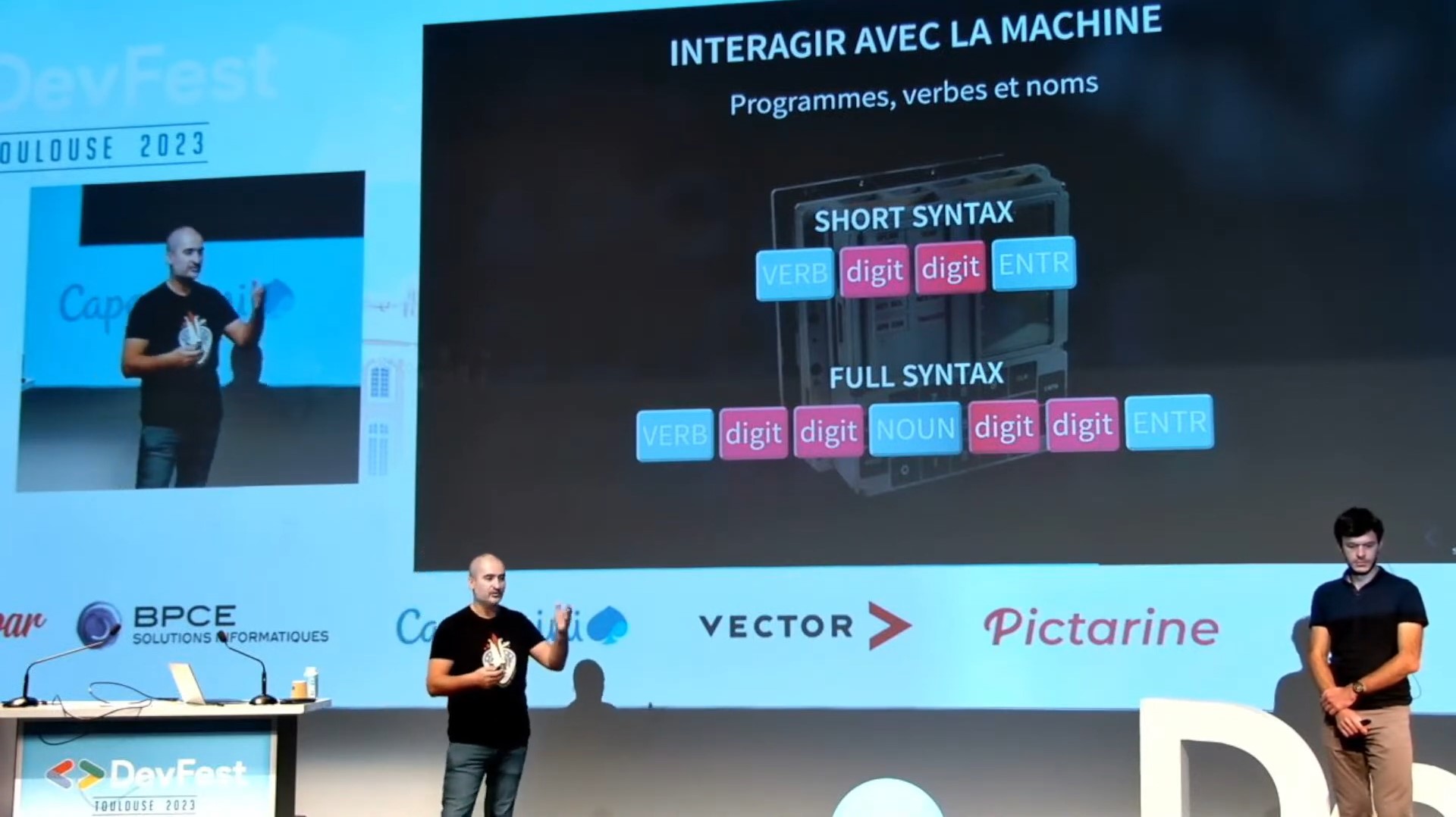

We were invited to present our talk, Clone ChatGPT with Hugging Face and Elasticsearch, in which we explored the powerful duo of Elasticsearch and Hugging Face. Elasticsearch, a versatile search engine, NoSQL database, and data analysis tool, pairs well with Hugging Face, the leading platform for developing open source machine learning models. Combining them makes it possible to build applications that enrich data and improve how it is presented. We illustrated this with examples and prototypes built on these technologies, offering a chance to dive into the world of Hugging Face, revisit Elasticsearch, and rediscover ChatGPT.

For the occasion, we made some changes to our presentation. To illustrate the NLP task of named entity extraction, we used the names of some of the organizers, along with the locations and dates of the conference, including the erratum ;-).

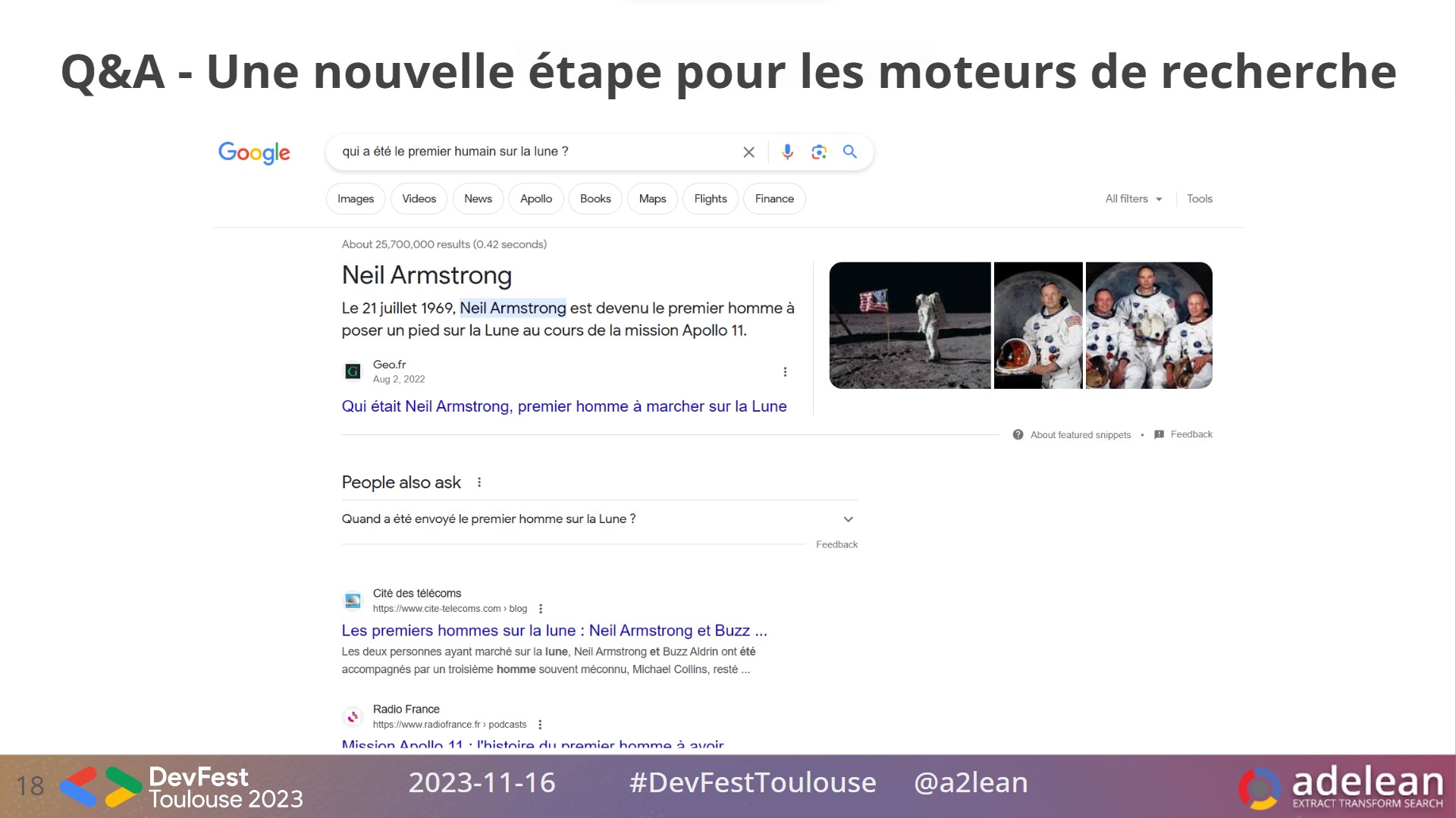

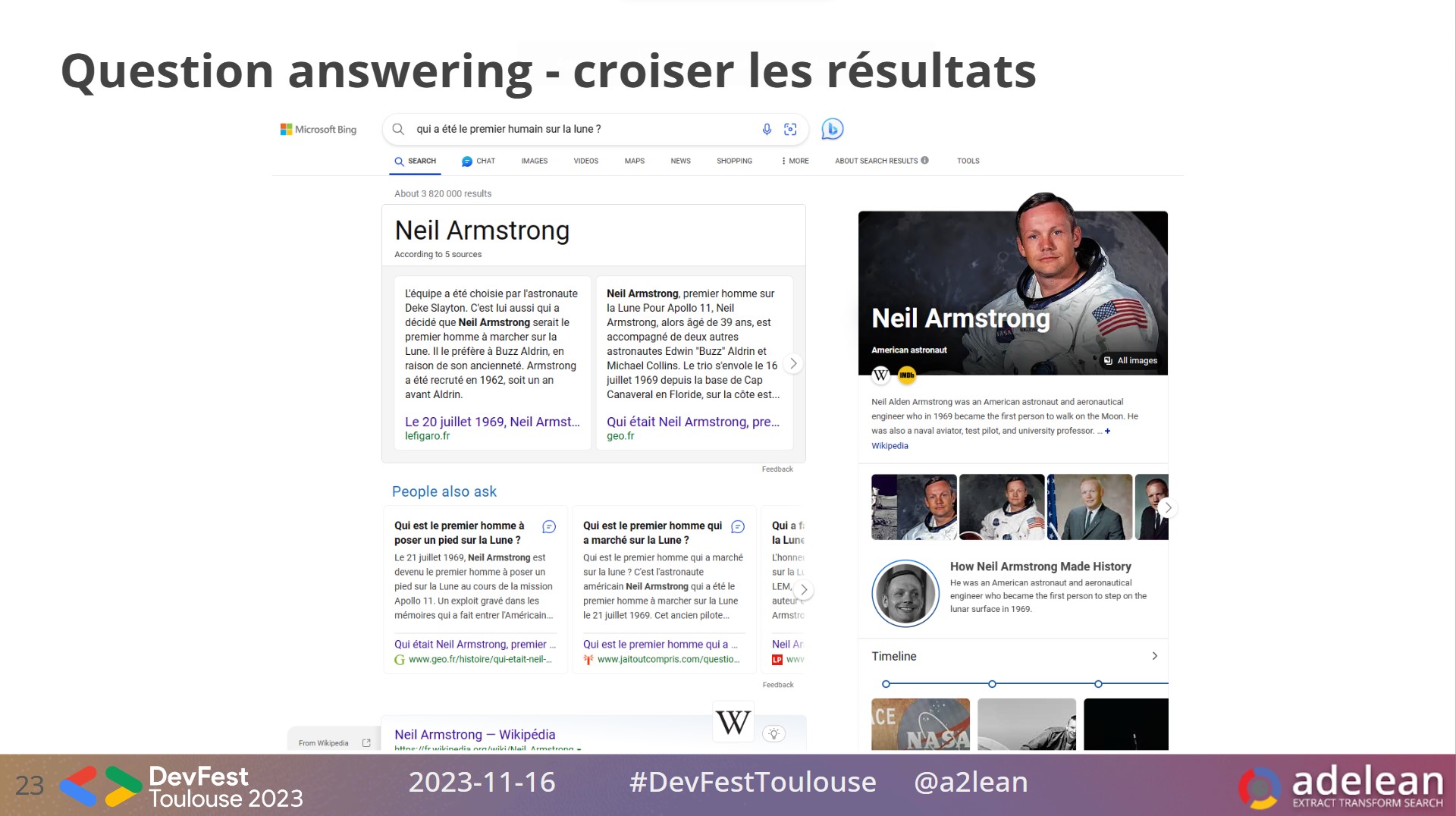
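For readers who want to try the named entity extraction task mentioned above, here is a minimal sketch using the Hugging Face `transformers` pipeline. It is not the code from our slides: the model name `dslim/bert-base-NER` and the helper names `extract_entities` and `group_by_type` are assumptions chosen for illustration. That model labels persons, organizations, and locations, so a different model would be needed to tag dates as well.

```python
from collections import defaultdict


def extract_entities(text, model_name="dslim/bert-base-NER"):
    """Run a token-classification (NER) pipeline on `text`.

    The model name is an assumption for illustration, not necessarily
    the one used in the talk. The import is done lazily so that the
    helper below stays usable without `transformers` installed.
    """
    from transformers import pipeline

    ner = pipeline(
        "token-classification",
        model=model_name,
        aggregation_strategy="simple",  # merge word pieces into whole entities
    )
    return ner(text)


def group_by_type(entities, min_score=0.5):
    """Group entity dicts (keys: entity_group, word, score) by entity type,
    dropping predictions whose score is below `min_score`."""
    grouped = defaultdict(list)
    for ent in entities:
        if ent["score"] >= min_score:
            grouped[ent["entity_group"]].append(ent["word"])
    return dict(grouped)
```

A typical call would be `group_by_type(extract_entities("DevFest Toulouse took place in Labège."))`, which downloads the model on first use. The grouping helper can also be tried on hand-written entity dicts of the same shape, without any model.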

We appreciated both the subject of the keynote, The AGC: return to the computer that brought humanity to the Moon, and the effort put into its inclusive wording. We adapted our question-answering slides accordingly. The question we asked Google was: “Who was the first human on the Moon?”

We paid tribute to Google, a pioneer of LLMs and the Transformer architecture, while also highlighting the advances of the new Bing, which integrates ChatGPT and cites its sources in its question-answering responses, even though the conference was sponsored by Google Developers Group.

Audience interaction and feedback were exceptional. The Pastel 1 room was full, which showed the interest in our subject.

After the question-and-answer session, we had the opportunity to talk with several participants. These conversations were enriching: they allowed us not only to clarify certain points of our presentation, but also to gather diverse perspectives on the use of AI technologies in different fields. We were particularly impressed by attendees’ enthusiasm to further explore the capabilities of Hugging Face and Elasticsearch in their own projects.

We mentioned the keynote earlier; here is a summary. We had the privilege of attending a fascinating talk entitled The AGC: return to the computer that brought humanity to the Moon, presented by Olivier Poncet and Romain Berthon. This presentation brilliantly highlighted the technological and computing challenges overcome during the Apollo program. The Apollo Guidance Computer (AGC) was at the heart of this adventure, playing a crucial role in the success of the lunar missions.

What particularly struck us was the way the speakers placed the AGC in its historical context, detailing the technological advances of the time and the software engineering legacy of the program. Audience feedback clearly reflects the quality of this keynote: most attendees rated it “Super interesting” and “Very enriching”, even if some found the technical level a little high.

One comment particularly resonated with our own reflections: the comparison between the limited resources used for these historic space missions and the current challenges of energy frugality. This raises exciting questions about innovation and the efficient use of resources, topics that are more relevant than ever. Ultimately, this talk was not only a journey into the past of space exploration, but also a source of inspiration for envisioning our technological and environmental future.

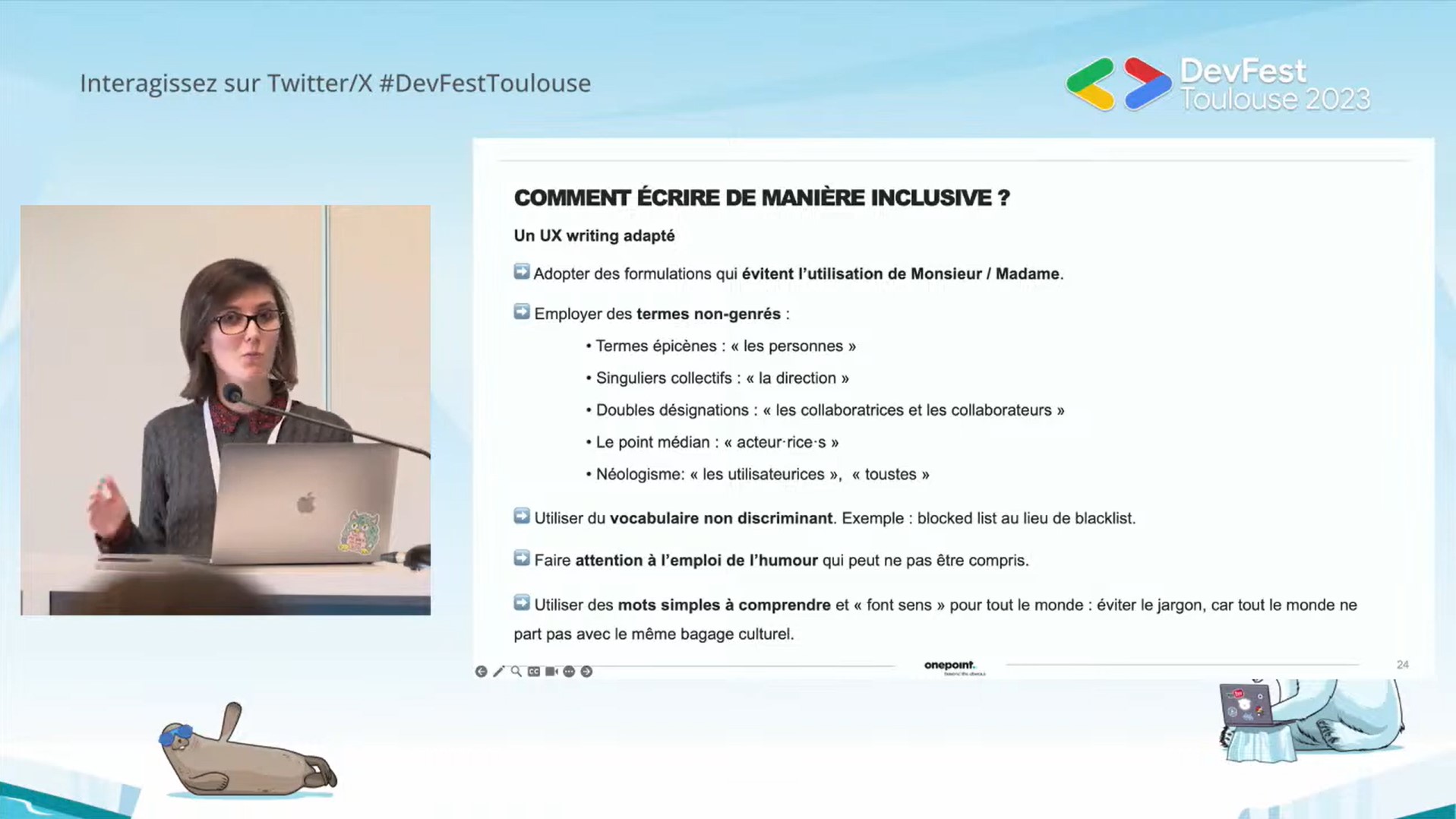

DevFest Toulouse 2023 featured an enlightening session by Noémie M. Rivière entitled How to include inclusiveness from the first stages of designing a digital service or product? In this session, Noémie explored the subtleties of inclusive UX, an approach that aims to create digital products that not only speak to everyone but also represent everyone. Inclusivity, according to Noémie, is not only an ethical concept but also a practice that enriches the user experience by taking the diversity of audiences into account.

The DevFest organizers fully embraced this philosophy, taking concrete steps toward accessibility and inclusiveness on their website and in the conference agenda. They also made the content more accessible, in particular by adding automatic subtitles to the talks in the amphitheater. These efforts show that inclusiveness is not just an add-on but an essential component of service design.

The slide below from Noémie’s presentation is our takeaway for implementing inclusivity in digital projects. It emphasizes fair wording, non-gendered terms, and vocabulary that respects all users.

This talk was not only enriching and interesting but also a powerful reminder that inclusiveness must be built in from the very start of any digital project.

We had the pleasure of attending the fascinating talk by Guillaume Laforge entitled Generative AI through practice: concrete cases of use of an LLM in Java, with the PaLM API. The Hemicycle room was the scene of an enriching exchange on the potential of Large Language Models (LLMs) for Java developers, who are usually further removed from the Python ecosystem traditionally associated with AI.

Audience feedback spoke for itself: 6 votes for “Funny and original”, 7 for “Very enriching”, 5 for “Super interesting”, and a remarkable 8 votes for “Very good speaker”. These ratings reflect the dynamic atmosphere and general appreciation of the session.

Our impressions are equally positive, with a special mention for the infographics presented. They were not only aesthetically pleasing but also very informative, covering varied topics such as the evolution of LLMs from Transformers in 2017 to PaLM 2 in 2023, or the nuances between Artificial Intelligence, Machine Learning, Data Science and Deep Learning.

We particularly appreciated the approach of Guillaume Laforge, who combined humor and expertise while involving the audience, without fear of the demo effect, where a live demo fails in front of the room. Even when he addressed the limitations of Google models, he did so with lightness and positivity. His anecdote and his demonstration of a generated story about Tux the penguin and invading cats, although risky, added a personal and humorous touch to the presentation.

Guillaume also mentioned two valuable resources for delving deeper into the subject: LangChain4j, an important project illustrated in the YouTube video Java Meets AI: A Hands On Guide to Building LLM Powered Applications with LangChain4j by Lize Raes, and Google’s training program Generative AI | Google Cloud.

In conclusion, this talk was not only a source of inspiration for Java developers interested in Generative AI, but also a great example of how to make technical topics accessible and entertaining.

Our experience at DevFest Toulouse was extremely positive. We not only shared our knowledge and expertise, but also learned a lot from the community. We left inspired and eager to apply new ideas in our future projects. A big thank you to the organizers for a memorable event!

We had the chance to present our work on language models, vector search and conversational search engines. Thank you to the participants for their constructive feedback.

And if you have read this far, thank you. We invite you to follow our work, apply to join our team, or use our services. At Adelean, we value continuing education, knowledge sharing and innovation.