Elastic{ON} Paris 2026 arrived with a bold promise of shaping the future of search. From the stage of the Maison de la Mutualité, one message stood out clearly: the future of Elasticsearch is agentic. Beyond this vision, the event delivered concrete product updates, architectural evolutions, and real-world use cases that show how Elastic is evolving across search, observability, and security.

This year, Adelean once again took part in the landmark ElasticON Paris conference. For the second year in a row, we played a leading role, contributing to the Community Track and engaging directly with the Elastic community.

The conference highlighted Elastic’s advancements in search, security, and observability. Notably, the shift toward agentic AI comes with a refined user experience, engineered to make these capabilities approachable for new users and long-term customers alike.

The day before the main conference, the Adelean team dove into a series of Elastic-branded workshops, focused on Security, Observability, and Search. These sessions offered an early look at the AI capabilities defining Elastic’s latest releases, ranging from generative AI integrations to fully agentic approaches.

The opening keynote reinforced this direction, outlining a roadmap centered on precision and innovation.

Elastic continues to lower the barrier to entry for advanced AI-powered search. A clear example is semantic_text, now generally available, which simplifies the entire semantic search workflow, from indexing to query time, by abstracting much of the underlying complexity.

This focus on simplicity extends to model integration. Following the acquisition of Jina AI, its state-of-the-art embedding models are now directly available through Elastic’s Inference API, enabling seamless download and immediate use without additional configuration.

Performance is the next layer of improvement. The integration with NVIDIA cuVS brings GPU acceleration to vector search, significantly speeding up large-scale similarity computations while preserving accuracy and flexibility.

But innovation is not only about ease of use and speed; it is also about scale. With DiskBBQ, Elastic introduces an IVF + BBQ on-disk architecture designed to reduce computational overhead for massive vector datasets. This approach makes semantic search viable and efficient even for deployments exceeding a billion vectors.
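To make the semantic_text simplification concrete, here is a minimal sketch of the two request bodies involved, written as plain Python dictionaries. The field name `content`, the sample question, and the helper function names are illustrative assumptions, and the mapping assumes the cluster provides a default inference endpoint. Nothing here is taken from the keynote itself.

```python
import json


def semantic_text_mapping(field: str = "content") -> dict:
    """Index-creation body with a single semantic_text field.

    No explicit embedding pipeline or vector field is declared; this
    assumes the cluster supplies a default inference endpoint.
    """
    return {"mappings": {"properties": {field: {"type": "semantic_text"}}}}


def semantic_query(question: str, field: str = "content") -> dict:
    """Search body that runs a `semantic` query against that field."""
    return {"query": {"semantic": {"field": field, "query": question}}}


if __name__ == "__main__":
    # Print both bodies so they can be pasted into a console or HTTP client.
    print(json.dumps(semantic_text_mapping(), indent=2))
    print(json.dumps(semantic_query("how do I rotate API keys?"), indent=2))
```

The point of the sketch is what is absent: there is no chunking logic, no embedding call, and no vector field in either body.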

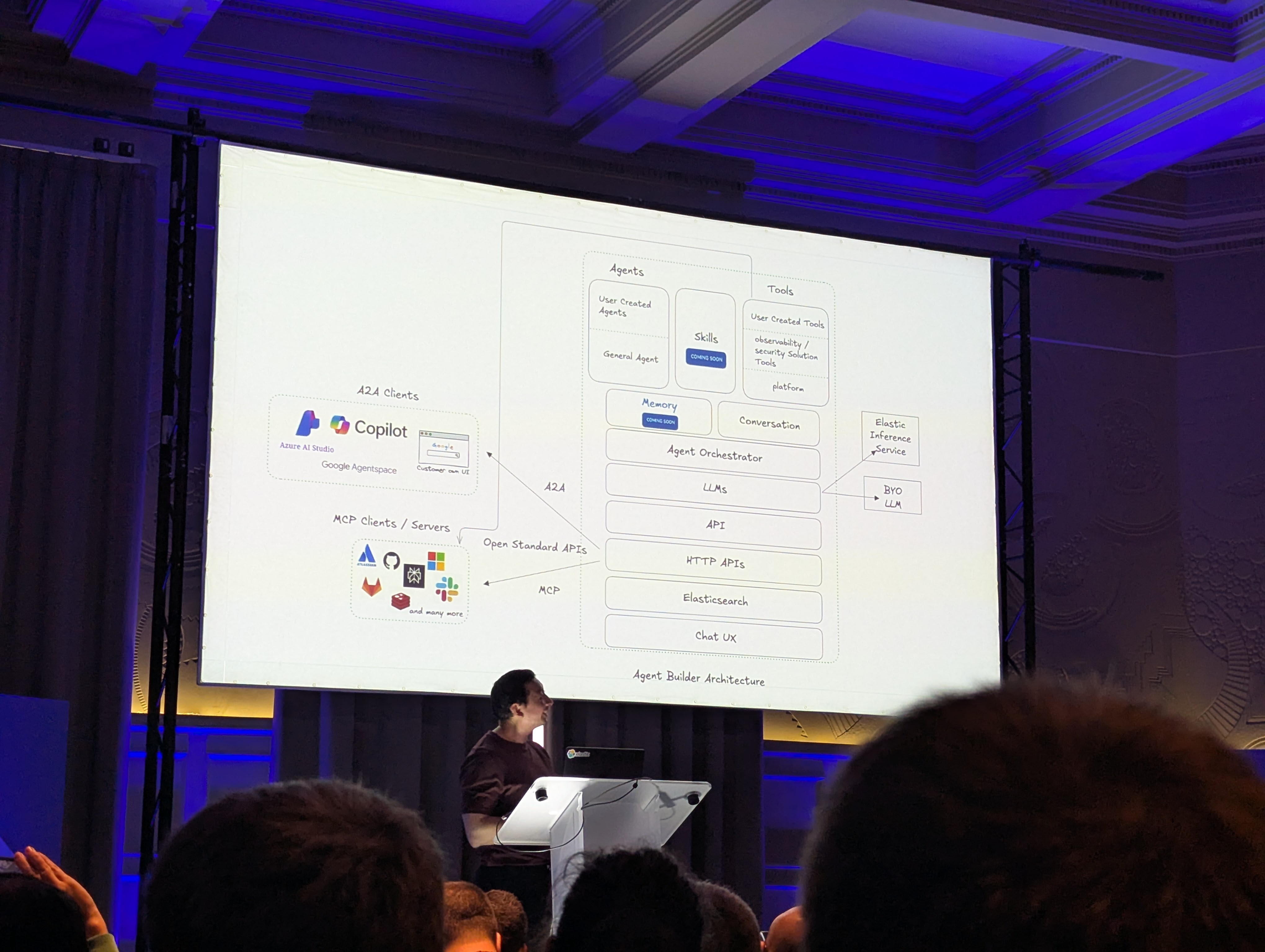

Perhaps the most transformative announcement was the introduction of the Elastic Agent Builder. This visual tool within Kibana allows users to define custom tools, orchestrate workflows, and build agents tailored to specific tasks, democratizing access to complex AI interactions.

This agentic approach extends deep into Observability, where Elastic demonstrated agents capable of analyzing logs, partitioning data, and filtering events to automate previously tedious workflows.

Beyond AI, Elastic introduced CLP (Compressed Log Processing). This new feature works alongside LogsDB and TSDB to offer superior log compression and storage efficiency for large-scale data.

Following the keynote, the consensus is clear: the future of AI is undeniably agentic. As we navigate this shift, protocols like MCP (Model Context Protocol) and A2A (Agent-to-Agent) will serve as the essential infrastructure guiding our path.

A key takeaway regarding A2A was highlighted in the presentation, “The Future of Building AI Agents in Elasticsearch”, by Joe McElroy.

Here, Elastic introduced the concept of “Skills”—essentially a collaboration language. Skills enable agents to broadcast their specific capabilities and share tools, allowing them to work together seamlessly to achieve user goals.

However, effective agents require more than just communication skills; they require context. As shown in the presentation “Managing the Memory of a Conversational Agent with Elasticsearch”, memory management remains a critical bottleneck as systems grow in complexity.

Traditional libraries like LangChain often cap memory at a fixed token limit (e.g., 34k), causing early context to vanish. While summarization offers a workaround, it frequently sacrifices the granularity needed for complex tasks.
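The effect of a fixed token cap can be illustrated with a small sketch. This is not LangChain's implementation: the `TokenBuffer` class, the whitespace-based `count_tokens` function, and the tiny limit of 10 tokens are all assumptions chosen to make the eviction visible.

```python
from collections import deque


def count_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


class TokenBuffer:
    """Keeps only the most recent messages whose combined size fits max_tokens."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.messages = deque()
        self.total = 0

    def add(self, message: str) -> None:
        self.messages.append(message)
        self.total += count_tokens(message)
        # Evict from the oldest end until the buffer fits again.
        while self.total > self.max_tokens and len(self.messages) > 1:
            self.total -= count_tokens(self.messages.popleft())


buffer = TokenBuffer(max_tokens=10)
buffer.add("my order number is 1234")       # 5 tokens
buffer.add("it arrived damaged yesterday")  # 4 tokens, total 9
buffer.add("can I get a refund")            # 5 tokens, total 14: oldest message is evicted
print(list(buffer.messages))
```

After the third message the order number is no longer in the buffer, which is exactly the kind of early context an agent may need later in the conversation.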

To solve this, we can look to the CoALA (Cognitive Architectures for Language Agents) framework, which frames agent memory through the lens of human cognition. In practice, this means moving away from simple token buffers and towards indexing the entire conversation history for semantic retrieval.

The framework distinguishes between three types of memory: episodic, procedural, and semantic.

Implementing this “infinite memory” requires smart strategies, such as chunking conversations with rich metadata (user IDs, tool calls) and using vector search for external file access.
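As a rough illustration of the chunking strategy, the sketch below groups conversation turns into documents that carry their metadata with them. The `Turn` class, the `chunk_conversation` function, every field name, and the chunk size of two turns are assumptions made for this example, not the schema shown in the talk.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Turn:
    role: str                        # "user", "assistant" or "tool"
    text: str
    tool_call: Optional[str] = None  # name of the tool invoked during this turn, if any


def chunk_conversation(turns, user_id, conversation_id, chunk_size=2):
    """Group consecutive turns into documents ready to be indexed."""
    docs = []
    for start in range(0, len(turns), chunk_size):
        window = turns[start:start + chunk_size]
        docs.append({
            "conversation_id": conversation_id,
            "user_id": user_id,
            "chunk_index": start // chunk_size,
            "first_turn": start,
            "last_turn": start + len(window) - 1,
            "tool_calls": [t.tool_call for t in window if t.tool_call],
            "text": "\n".join(f"{t.role}: {t.text}" for t in window),
        })
    return docs


turns = [
    Turn("user", "Where is my order 1234?"),
    Turn("assistant", "Let me check.", tool_call="lookup_order"),
    Turn("assistant", "It ships tomorrow."),
]
docs = chunk_conversation(turns, user_id="u-42", conversation_id="c-1")
for doc in docs:
    print(doc["chunk_index"], doc["tool_calls"], repr(doc["text"]))
```

Each document can then be indexed with its `text` in a field suited to semantic retrieval, while `user_id` and `tool_calls` remain available as ordinary filters.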

Of course, with increased complexity comes the need for better visibility. The conference highlighted a dual-index strategy for observability: storing workflow executions in one index and granular node-level details in another. This separation allows developers to analyze agent behavior without sacrificing performance, ensuring our agents remain not just smart, but predictable and transparent.
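One way to picture the dual-index strategy is the sketch below, which turns a single workflow run into one summary document and one document per node, linked by a shared `execution_id`. The index names, field names, and the `build_trace_documents` function are hypothetical; the talk described the separation, not this schema.

```python
import uuid
from datetime import datetime, timezone

EXECUTIONS_INDEX = "agent-workflow-executions"  # hypothetical index name
NODES_INDEX = "agent-workflow-nodes"            # hypothetical index name


def build_trace_documents(workflow, node_results):
    """Return (index, document) pairs: one summary doc plus one doc per node."""
    execution_id = str(uuid.uuid4())
    now = datetime.now(timezone.utc).isoformat()
    failed = any(n["status"] == "failed" for n in node_results)
    summary = {
        "execution_id": execution_id,
        "workflow": workflow,
        "@timestamp": now,
        "node_count": len(node_results),
        "total_duration_ms": sum(n["duration_ms"] for n in node_results),
        "status": "failed" if failed else "succeeded",
    }
    docs = [(EXECUTIONS_INDEX, summary)]
    for position, node in enumerate(node_results):
        # Node-level details live in their own index and point back to the run.
        docs.append((NODES_INDEX, {
            "execution_id": execution_id,
            "position": position,
            "@timestamp": now,
            **node,
        }))
    return docs


trace = build_trace_documents("log-triage", [
    {"node": "fetch_logs", "status": "succeeded", "duration_ms": 120},
    {"node": "summarize", "status": "failed", "duration_ms": 45},
])
for index, doc in trace:
    print(index, doc)
```

Dashboards that only need run-level health can query the small summary index, while a debugging session joins on `execution_id` to pull the node-level detail.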

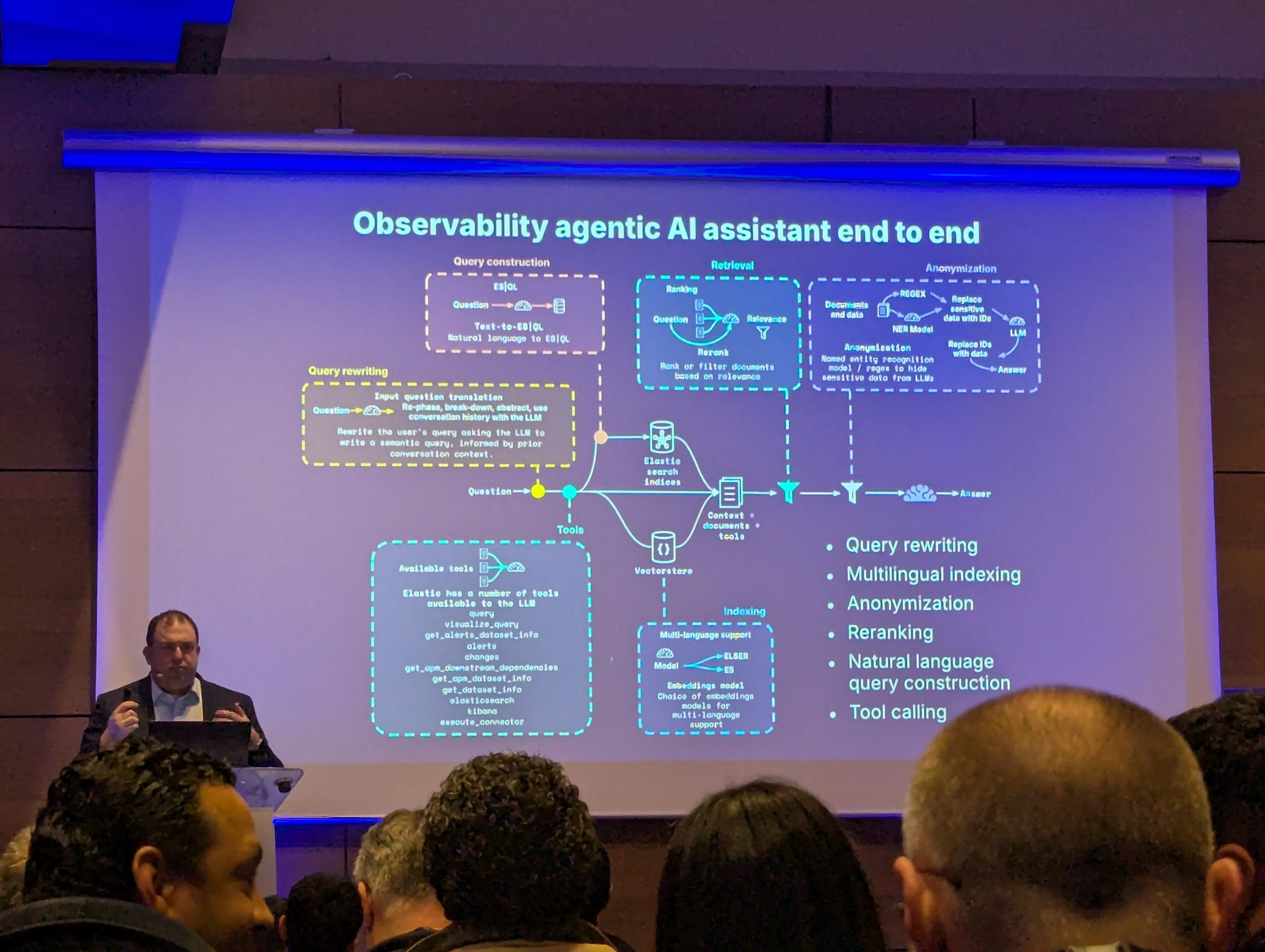

As mentioned previously, the agentic revolution isn’t limited to building search applications; it also extends to observability and security.

Consider the classic “2 a.m. nightmare”: a latency spike appears on your dashboard following a routine patch. In situations like this, the bottleneck isn’t monitoring. It’s the time-consuming investigation and coordination required to fix the problem.

To address this challenge, Elastic is augmenting its existing capabilities (log categorization, anomaly detection, trace analysis) with a new layer of intelligence.

In the talk “From SLO breach to root cause: AI for modern observability” by Drew Post, we discovered how AI can be leveraged to cut through the noise and surface plausible hypotheses instantly.

Once an AI Assistant has access to domain-specific information, it can reason over the data to pinpoint root causes. A critical differentiator here is transparency: every action the agent proposes is expressed in ES|QL. This allows human operators to easily review the agent’s work in detail before approving it.
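The review-before-approval idea can be sketched as a small gate around an agent's proposal. Everything named here is an assumption for illustration: the `ProposedAction` class, the `to_request_body` function, the `traces-*` index pattern, and the `duration_ms` and `service_name` fields. The only fixed points are the ES|QL syntax itself and the `{"query": ...}` shape of an ES|QL request body.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    description: str        # what the agent says it wants to find out
    esql: str               # the exact query it intends to run
    approved: bool = False  # set to True by a human after review


def to_request_body(action: ProposedAction) -> dict:
    """Build the ES|QL request body, refusing anything not yet reviewed."""
    if not action.approved:
        raise PermissionError(f"not approved: {action.description}")
    return {"query": action.esql}


action = ProposedAction(
    description="Find the services with the highest average latency in the last hour",
    esql=(
        "FROM traces-* "
        "| WHERE @timestamp > NOW() - 1 hour "
        "| STATS avg_latency = AVG(duration_ms) BY service_name "
        "| SORT avg_latency DESC "
        "| LIMIT 5"
    ),
)

print(action.esql)      # the operator reads the query as plain text
action.approved = True  # explicit sign-off
body = to_request_body(action)
```

Because the proposal is a readable query rather than an opaque tool call, the operator can check the index pattern, time window, and aggregation before anything runs.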

Key capabilities discussed include:

The future evolution of these capabilities is what Elastic calls AI Investigations. This approach will decouple detection from recovery: alerts indicate that something is wrong, while remediation decisions (manual or automated) are determined independently and do not need to mirror the alerting conditions.

At 14:35, it was showtime for Adelean. Benjamin Dauvissat and Pietro Mele took the stage to present “Fleet Invaders.”

The goal of the session was straightforward: to demonstrate that managing infrastructure at scale doesn’t have to be a battle. The duo showcased just how seamless Elastic Fleet is to install and maintain, positioning it as the ideal solution for teams tasked with monitoring diverse ecosystems—spanning multiple machines and services—without compromising on security.

Across the various sessions, we witnessed the confirmation of a trend observed over the last two years: ES|QL is establishing itself as the reference language. It is proving to be the most comprehensive tool available, covering data analysis, observability, and security, as well as document search. Many new operators were presented:

To conclude, here are our takeaways:

The Future is Agentic: We are shifting away from simple chatbots toward autonomous agents that can plan, collaborate (via protocols like A2A and MCP), and execute workflows. Elastic is democratizing this with tools like the Agent Builder, making advanced AI accessible without deep complexity.

Memory is the New Frontier: To build truly useful agents, we must move beyond fixed token limits. By adopting the CoALA framework (Episodic, Procedural, Semantic) and leveraging Elasticsearch to index full conversation histories, we can give agents “infinite memory”, making them smarter and more context-aware over time.

Ops, from Detection to Action: In the world of observability, AI is becoming a proactive teammate. With AI Investigations and native OpenTelemetry storage, the focus is shifting from simply flagging alerts to automated root cause analysis and remediation—always with ES|QL providing a transparent audit trail.

Simplicity at Scale: Whether it’s managing agents or monitoring infrastructure, the tools are getting sharper. As demonstrated in our “Fleet Invaders” session, managing diverse ecosystems with Elastic Fleet is becoming easier and more secure, proving that scale doesn’t have to mean complexity.

Ready to make your infrastructure agentic? The Adelean team is here to help you navigate this shift.